A data migration project is no easy feat and carries its own fair share of pitfalls and obstacles that you’ll need to overcome. Big or small, a poorly executed data migration can have far-reaching repercussions beyond its launch date and affect the day-to-day operations of your business. Data migration is a topic that we could talk about for hours and still barely scratch the surface, so I have contained the scope of this article to Salesforce migrations.

In this article, you’ll learn about tools, best practices, and common pitfalls. We’ll go over project planning, execution, proper testing, user acceptance, and how to execute a proper launch. Many of the points in this article, especially on tools and pitfalls, come from repeated personal experience. This is by no means an exhaustive list of everything you’ll encounter, so I have strived to highlight the most impactful and most common.

Planning for Your Migration

Perhaps one of the biggest factors in successful data migration is properly planning your migration. In most companies, your data SME may have some knowledge into how to execute an extract, transform, load (ETL) process, but these types of migrations are typically on a smaller scale and are largely one-off scenarios. Although many data SMEs, or those who don’t have an understanding of the ETL process, may advise that small-project solutions can scale to larger projects, this is definitely not the case. As your data grows in size, and you add more source-to-target pairings, the complexity of the migration grows as well.

As with many other types of projects, the planning of a data project combines the elements of project management, business analysis, technical analysis, and more. Unlike other projects, however, you typically find your data migration expert(s) wearing all of these hats. The simple truth is that many of the questions we might ask based on conversations with project stakeholders require a specific mindset or approach to problems. Identifying the true scope of the effort, the needs, and the necessary timeline; translating questions and deliverables to non-data stakeholders; and a vast array of other tasks typically fall to the data experts who, based on their previous knowledge and experience, are able to juggle all of these moving parts.

Asking the Right Questions

The first thing any good data migration expert should do is ask the correct questions. I’ve assembled a starter list, but this is by no means an exhaustive list. These questions, and the answers derived from them, lay the foundation for further questioning. Providing ample upfront time for data discovery can minimize the chance of problems occurring later on in the project.

- Who owns the data?

- Where does my source data live?

- Do I have access to my data?

- What format is my data in?

- Do I need to cleanse or deduplicate my data?

- How much data do I have?

- What data do I truly need?

- What is the target production date?

Although most of these questions seem fairly straightforward, they can introduce a complexity all their own. As you start to identify who within the company can answer these questions, and even pose the questions to those individuals, you may start to get feedback or unexpected responses to your questions. Let’s look at a few example scenarios.

As you begin to inquire about where data lives, you will potentially start to identify multiple data owners. If you own the data yourself, or your company has a very clear authoritative figure who can provide overriding directives, then you can stop there. However, if this is not the case, then you can start to draw closer to the owners of the data. Approaching those individuals, especially if they’re unaware of the project, may raise questions. “Why do you need access to my data? Who will see it? Who will own it once it is moved?” All these questions, and more, could be based on a reluctance to let go of their ownership or an old-fashioned fear for job security.

Let’s say you’ve identified where the source data is and you’ve even figured out if and how you will access that data. The next concern is the format of the data being migrated. In my experience, the two best methods for moving data are to directly access the legacy database through the establishment of linked servers or to restore a backup of the database. However, you might be given a stack of CSV files, and if this is the medium of your data transfer, you should be prepared to closely evaluate your timeline to ensure you have adequate time to perform the migration by the target date.

You can quickly begin to see how just a few questions can quickly explode into a series of if-then scenarios and follow-up questions. The answer to each question could affect your timeline, the tools you’ll use, the people involved, and even who should be able to see the data during the migration process (think HIPAA or PII, to name just two of the many data restrictions imposed by internal corporate and governmental entities). This is why the discovery and planning phases of your project are so critical to a successful ETL project.

A Realistic Timeline Leads to a Successful Deployment

Let’s be honest with ourselves…data is hard. If it wasn’t, then there wouldn’t be untold numbers of data consulting firms dedicated to just that. However, many people still underestimate the value and complexity of data migration. Even seasoned experts can sometimes underestimate the real scope of data migration, and I am guilty of this myself. So what does a realistic timeline look like? To be brief, that depends on the team you have and the process, or processes, that you follow.

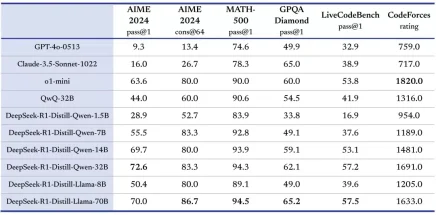

The first step in setting yourself up for a successful go-live date is to evaluate your data source. I typically see many migration consultants evaluate this based on the number of records. To a degree, and for a few reasons, this is a valuable metric. However, when you get into larger datasets, you might find that the difference between 1 million records and 1.25 million records only results in a handful of minutes. In fact, when I size a Salesforce migration, I start out at a scale of 100,000 batches and, once I hit 1 million, modify my scale to 500,000. Take a look at the below table on the Bulkapi and the Bulkapi2:

| Type | 500 Rows | 5,000 Rows | 25,000 Rows | 250,000 Rows |

| Bulkapi | 0:18 | 0:46 | 2:12 | 17:08 |

| Bulkapi2 | 0:06 | 0:35 | 1:06 | 2:37 |

Table data: DBAmp Documentation

As you can see, a much larger variance in data would be needed to have an effect on your timeline. If anything, a large dataset is going to have the most impact on your blackout window for go-live (how long it takes to turn off Production and move data into it). So what can lead to a successful timeline? Simply put, tables of data (different than the size of the data set) and adequate resources.

Tables of data typically get underestimated in favor of data sets or even the number of objects you are migrating data into. Not to undermine the importance of those, but looking at data sources and how many tables are in the sources you are moving is much more crucial to planning an accurate timeline. Given the following, you have two legacy systems that are both moving to Salesforce. In Salesforce, you’ve identified a total of 12 objects that will be populated with historical data prior to go-live. Being the savvy data expert that you are, you identify 12 migration scripts that need to be created. You plan, you run calculations and numbers, and you build your timeline in large part around how long it will take you to map and build those 12 scripts. Then you get to the part of the project where you actually perform the work and realize that a table in each legacy system needs to move to Account…you’ve just gone from 12 to 13. Not a big deal, you say, because you built a little extra time into your migration. Then the same thing happens for Contacts, Opportunities, Cases, Orders, Products, and that weird custom object the business needed that has a number of complexities to it.

Let’s say you are wise, however, and planned your migration according to data sets and the number of source tables (CSV files, Excel files, etc.). All is right in the world. You will be moving 2 million records over the course of 11 weeks and pushing 20 tables of data into 13 different objects. All seems right until you’re told that you’re the only migration consultant performing the work. Not to worry; you have confidence in your abilities and you accept it with a smile. After all, how hard can it be? Yes, how hard could it possibly be for a single person to gather requirements around data, map every single field, gather transformation and filter requirements, create each script, push sample sets of data, run a quality analysis, resolve issues, address unexpected requirements that come up last minute, and stay organized enough to repeat your success in user acceptance testing (UAT) and Production? This, my friends, is the reality of the development cycle.

Don’t Underestimate the Development Cycle

The development cycle is a very crucial stage in a successful migration. I cannot count the number of times I have seen a project fail, or go horribly off track because the development cycle was not given its due consideration. As data experts, we have a tendency to think that we’re infallible and, because of this mindset, we analyze the need, write the scripts, and tell the team we’re ready to move to Production four months ahead of the timeline.

What does a good utilization of the development cycle look like? Test, test, and test again. I cannot stress enough the importance of getting requirements and mapping signoff, testing your transfer scripts, getting signoff of the migrated data, and testing again. It may sound like a lot of extra work, especially given that you have to implement a method to pull data out of the system so you can simply push it back in, but the less time you spend testing, the greater chances of failure.

A Successful UAT Is Critical to User Adoption

Just as the development cycle is important for putting your scripts to the test, UAT is important for ensuring that you’ve managed to capture every single requirement that will be needed on Day 1. UAT is just that…the people who will be using the system actually logging in and testing the functionality and data for accuracy and functionality. Every project that has failed after launch, meaning the migration went smoothly but problems started to arise after Day 1, could almost always point back to a key user who was left out of the UAT process (or simply did not choose to participate).

I can guarantee that, in almost every instance, your team has a “Susan” who sits in her cube and is a rock star at what she does. She’s an expert in her field, others in the company call her the Oracle, and she almost never calls in sick or goes on vacation. Susan is amazing at her job and really turns the wheels for the business in the current system. Susan has developed business processes that only she knows and has never needed to teach anyone else because the work ends up on her desk.

At Day 1 in the new system, Susan realizes her business processes no longer work with the new way the company does things. She also realizes there was an opportunity to roll some of her processes into the core system, alleviating much manual effort and making it easier for her to share the work with her peers. Even worse, Susan finds out you left critical data or measurements behind that she keeps in Excel spreadsheets, and now your sales calculations will no longer be accurate.

Dress Rehearsals Are for More than the Runway

You may laugh at the title of this one, but a dress rehearsal is so important. If you’re new to the world of deployments, a dress rehearsal is just another way of referring to a mock go-live, which will give you a very real idea of what things will be like when you are really moving to Production. To give you a true picture of D-Day, you’ll want to involve the whole project team. Did they create a process for copying your migration user profile/permissions from one org to the other? Will all the custom objects and fields exist in the new system? What about picklist values and any new triggers or workflows?

It may seem like a big investment to take a fresh Full sandbox and do an end-to-end run into it, but the investment pays off when you consider that you’ll shake out any issues that might crop up at Production during this special time of migration. A project has so many moving parts that affect the outcome, many of which you may have no knowledge or control over, and they can really hurt your planned timetable at project launch if you don’t prove them out early on. Just as a car manufacturer wouldn’t let a car hit the dealership until they put it through a series of tests at the factory, you shouldn’t let your data hit the Production system until it’s been vetted against the whole process.

Production Migration: Big Bang vs. Delta vs. Phased

Ah, the question of questions, one that can take a project from 3 months to 5 months: How will we load the data to the new system?

The traditional approach for moving data, and the approach that we make assumptions on in every section of this article, is a “big bang” approach. This means that, as an example, you’ll stop doing business at 5 pm on a Friday, data will be handed off to you after all the systems true up, you’ll do the data migration through the weekend, and then you’ll turn on the systems on Monday morning.

Tools for Success

A successful data migration is the sum total of all its parts. This means giving consideration to which tools you’ll use. When I was in AP Chemistry in high school, my instructor used a word that I believe applies here. It’s a French word that has stuck with me for 17 years: accouterments. In the simplest terms, the most common given definition is “the right tools for the job.” That’s how we evaluate the tools we will use for data migration. We don’t simply grab any and every tool associated with data or ETL. No, we evaluate and select the right tool, or tools, for our specific migration. We also recognize that, even though we might do multiple Salesforce migrations over our careers, each migration might require different tools. Therefore, we evaluate our toolset every single time.

That said, below are some standard tools or assets I typically use across every single Salesforce migration. After all, being repeatable means repeated success if I have planned correctly.

Storage Calculator

Storage calculation is perhaps one of the most critical things you’ll do at the beginning of a Salesforce ETL project. Why? The answer is quite simple. Your Salesforce org has a limited amount of data available for storage and increasing that storage, which costs additional money, can sometimes involve multiple iterations of conversations directly with Salesforce around terms, amounts, and pricing. If you wait until the day the project is going to launch to have this discussion or find out during the Production migration you don’t have enough storage, you will most definitely have to delay your launch date.

Calculating storage isn’t as simple as measuring the size of your existing database and then looking at the total available space in your org. First, you‘ll want to consider data growth as you move beyond the launch date and start using Salesforce to drive your day-to-day business. If you max out your data storage at Production, you’ll have no room for accepting new opportunities, as an example, on Day 1 in the new system. Talk about poor user experience.

The second point to consider is that all data is not created equal in Salesforce. Object storage is different than big object storage, and both are different from file storage. Even objects have some odd differences. As I’m sure you probably know, by now, each record in Salesforce is 2KB in size. Did you know, however, that a person account actually uses 4KB, as it creates both an account and contact record that are seamlessly tied together? Knowledge articles also require more storage than a simple account record. There are a handful of these instances that you’ll want to be aware of.

In addition, consider source data that duplicates as it goes into Salesforce. You may have an instance where you want to implement a subscription model. This data is fed by your historical orders, where your current subscription model isn’t the best, and you measure only the size of your historical orders. In Salesforce, you start to realize that each line item on an order is a record in Salesforce. You also need to generate a subscription record to generate future invoices on the fly. To support any reporting, you need to determine how many times that subscription would have “fired” in your old system, had it supported such functionality, and then cloned that order multiple times to build out the historical view of the subscriptions.

Finally, now that you know roughly how to calculate your storage, you need to actually build a calculator. You can do this in any number of ways, but I use an Excel document with a built-in formula to help me with these calculations. Instead of entering the size of the data, I am moving in terms of bytes (KB, GB, etc.), I enter how many records are going to each target object. The formula is set up to determine how big a record will be needed in Salesforce for each object (typically 2KB, but remember the oddities in a few objects), and a separate formula is set up to determine the sizes of all the files I’ll be moving (measured in bytes). Once those calculations are run and totaled in their individual fields based on the storage medium used in Salesforce, I have a percentage calculation for how much growth I need to make room for (remember, your data is going to continue growing while the business continues in the legacy system).

Mapping Document

The mapping document is a simple Excel spreadsheet that enables me to map data from source to target. I typically lay out the mapping so that all my target fields are on the left-hand side and include relevant columns to define useful information about the target fields (label, API name, data type, picklist values, transformation logic, etc.). The sheet is bisected by a column indicating whether or not we will migrate data to that target field (a simple Y/N selection) and then the right-hand side is everything we know about the source data (table name, field name, join criteria, data type, etc.).

The more detail you have in your mapping document, the more successful your migration will be. However, including more detail also complicates the mapping process and results in longer run times, as you have to fill in more information that is being asked for. If you’re the data SME for the project and are well versed in both your legacy data and the data definitions within Salesforce, you can afford to include less detail here and rely on your tribal knowledge. This does mean, however, it will be harder for anyone to step in and pick up your work, or work alongside you, and it isn’t ideal if you’re working with a third-party firm to migrate the data.

Deployment Checklist

The deployment checklist is what I consider to be the most critical tool to the project when it comes to sharing information on the process with stakeholders. My checklist includes sections for pre-migration, the actual migration, and post-migration steps. Pre-migration steps are anything I do to prepare the data to be moved (such as uploading a fresh copy to SQL Server), any validation rules or triggers I might want to disable (or enable in rare instances), and anything else I want to be done before I move data. This can get very granular, especially when you’re disabling or enabling logic in your org, as you will want to list each of those out, but it truly does pay off in the long run.

The migration steps are just that. These include each script I execute, in the order in which they should be executed, to move data from staging to Salesforce. This section is fairly simple; you just want to include each step of moving data.

Post-migration is very similar to pre-migration. The most similar thing is to list each piece of logic or automation that you disabled in pre-migration as an enable in post-migration (or the mirror, depending on what you did in pre-migration). Additionally, you would include any other things you might do after you move data that should never be missed (verify the top 40 accounts, run a report to determine records that were not inserted due to errors, etc.).

In addition to the steps from start to finish, I also track the time it took each step to run. This is critical because it allows me to more accurately estimate how long the blackout window will be at launch when migrating data to Production. I follow this checklist with every migration I do, whether a partial or full migration, regardless of the target (dev, QA, UAT, Production). You’ll find that, as you go through the steps over and over again, you’ll get more efficient at the execution. You’ll also be able to quickly identify your problem areas and can focus on improving the runtime of those steps so that you’re always tightening up your window as you move to Production.

DBAmp

DBAmp is a tool you probably haven’t heard of before unless you’re very familiar with the data-world of Salesforce. Even then, a number of tools are similar to DBAmp, but none I feel is as capable as DBAmp. Its team is always improving the tool’s existing functionality, and it has greatly evolved over the years.

DBAmp acts as a data source connector within SQL Server and enables you to set up a linked server that connects directly to Salesforce, or any sandboxes, for the purpose of moving data. This allows you to execute prebuilt stored procedures in SQL that can move data through the Salesforce APIs without requiring any knowledge other than SQL and an understanding of the stored procedures. The company does a fantastic job of documenting tool usage, including providing many good examples of real-world scenarios, which makes it fast and easy to learn.

Data Migration vs. Data Integration

Before ending the article, I feel I should mention that data migration and data integration should be viewed (and treated) very differently. Data migration may contain some of the same elements of an integration but with two distinct differences: Some of the rules for migration may be slightly different for the data coming in than the rules being used for the data that will continue to feed the system moving forward, and migration is a one-time effort.

When performing an integration, you’ll want to consider the timetable an integration will run on, push versus scheduled integrations, which tools should be used to support a more permanent architecture, and a number of other things that I do not claim to have any expertise in and only some exposure to.

Conclusion

Hopefully, this article has been informative and helpful in providing you, or your team, with a clear roadmap of what a data migration looks like. Having an understanding of what to expect, and what pitfalls to avoid, is critical to project success. I covered a lot of topics, but it is my hope to not only arm you to be successful but to also convey the magnitude of a data migration project.

To reiterate, this article is by no means an exhaustive list of every tool, pitfall, or best practice related to data migrations. The topics covered here are specific to Salesforce and cover some of the most common of the aforementioned areas. Share some of your tips, tricks, or tools in the comments.